Model View Controller Pattern - Brainstorm August 23, 2012

I don't typically know the names of everything I create, even if I copy the concept from another technology. But here's what it has:

I wanted to take some pain out of doing social sharing, so posting items automatically to twitter seemed like a good step to take. Of course, with my paradigm shift into Node.js and MongoDB, and it being fairly new across the board, I would have to write it myself, with the added benefit of being able to share how it was done!

Twitter's API documentation is pretty good, as far as API documentation goes. Their OAuth docs are on par with Google's and Facebook's (I use both of those on the site as well, as they are OAuth 2.0 and 2.0 is generally much easier than 1.0). All of their documentation can be found on dev.twitter.com, and that's also where you would create and manage your app, app keys and secrets, etc.

Now, completely ignoring the rate limiting part of the Twitter API, we can write a pretty straightforward method to send status updates as the user you created the application under. You can get the access tokens right in the Details section of your application. You'll need the access token and access token secret, as well as the consumer key and the consumer secret key. Don't give these to anyone!

The hardest part about OAuth 1.0 is generating the signature correctly. It's not that hard, either, since Twitter provides excellent documentation on creating the signature. The base string used for the signature is created like this:

These are the only requires you'll require (heh)

var https = require("https"), querystring = require("querystring"), crypto=require("crypto");

var oauthData = { oauth_consumer_key: consumerKey, oauth_nonce: nonce, oauth_signature_method: "HMAC-SHA1", oauth_timestamp: timestamp, oauth_token: accessToken, oauth_version: "1.0" };

var sigData = {};

for (var k in oauthData){

sigData[k] = oauthData[k];

}

for (var k in data){

sigData[k] = data[k];

}

Here we're gathering up all of the data passed to create the tweet (status, lat, long, other parameters), and also the oauthData minus the signature. Then we do this:

var sig = generateOAuthSignature(url.method, "https://" + url.host + url.path, sigData);

oauthData.oauth_signature = sig;

Which calls this function

function generateOAuthSignature(method, url, data){

var signingToken = urlEncode(consumerSecret) + "&" + urlEncode(accessSecret);

var keys = [];

for (var d in data){

keys.push(d);

}

keys.sort();

var output = method.toUpperCase() + "&" + urlEncode(url) + "&"; // use the method that was passed in instead of hardcoding POST

var params = "";

keys.forEach(function(k){

params += "&" + urlEncode(k) + "=" + urlEncode(data[k]);

});

params = urlEncode(params.substring(1));

return hashString(signingToken, output+params, "base64");

}

function hashString(key, str, encoding){

var hmac = crypto.createHmac("sha1", key);

hmac.update(str);

return hmac.digest(encoding);

}

With this function, you can successfully generate the signature. The next part is passing the OAuth headers correctly. I simply do this:

var oauthHeader = "";

for (var k in oauthData){

oauthHeader += ", " + urlEncode(k) + "=\"" + urlEncode(oauthData[k]) + "\"";

}

oauthHeader = oauthHeader.substring(1);

And then create the request and pass it along on the request like this:

var req = https.request(url, function(resp){

resp.setEncoding("utf8");

var respData = "";

resp.on("data", function(data){

respData += data;

});

resp.on("end", function(){

if (resp.statusCode != 200){

callback({error: resp.statusCode, message: respData });

}

else callback(JSON.parse(respData));

});

});

req.setHeader("Authorization", "OAuth" + oauthHeader);

req.write(querystring.stringify(data));

req.end();
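The snippets above call a urlEncode helper and use a nonce, but neither is shown in this post. Here's a minimal sketch of what they might look like, assuming RFC 3986 percent-encoding (which Twitter's signature docs ask for) and a random hex string for the nonce. makeNonce is a made-up name for illustration, and this is not necessarily the code that runs on the site.

```javascript
var crypto = require("crypto");

// Percent-encode per RFC 3986. encodeURIComponent leaves ! ' ( ) * alone,
// so those are escaped by hand.
function urlEncode(value){
  return encodeURIComponent(String(value)).replace(/[!'()*]/g, function(c){
    return "%" + c.charCodeAt(0).toString(16).toUpperCase();
  });
}

// Hypothetical helper: a random, single-use string for oauth_nonce.
function makeNonce(){
  return crypto.randomBytes(16).toString("hex");
}

console.log(urlEncode("Hello Ladies + Gentlemen!")); // Hello%20Ladies%20%2B%20Gentlemen%21
console.log(makeNonce().length); // 32
```

One thing to watch: as far as I can tell, querystring.stringify leaves those same five characters unescaped, so a status containing "!" could produce a request body that doesn't match what was signed. Building the body with the same urlEncode helper is one way around that.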

There are other checks, like when you include a URL in the tweet text, you'll need to see that your text plus the length of the generated t.co URL doesn't exceed 140 characters. That URL length will change fairly infrequently, and slower and slower as time goes on, since more URLs can be generated with just 1 more character added. This data is available though. I have another function that gets the configuration from twitter, and passes that along to my function that actually generates tweets from the database.

function getHttpsNonAuthJSON(host, path, query, callback){

var url = { host: host , path: path };

if (query != null) url.path = url.path + "?" + querystring.stringify(query);

https.get(url, function(resp){

resp.setEncoding("utf8");

var respData = "";

resp.on("data", function(data){

respData += data;

});

resp.on("end", function(){

callback(JSON.parse(respData));

});

});

}

This is a general function to get any non-authenticated https request and perform a callback with a JS object. I might call it like this, for example

getHttpsNonAuthJSON("api.twitter.com", "/1/help/configuration.json", null, function(config){

console.log(config.short_url_length_http);

});
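The length check described earlier could be sketched like this. It assumes links are found with a simple regex (Twitter's own link detection is more involved) and that the two short URL lengths come from the configuration call, like the short_url_length_http value above. tweetLength and fitsInTweet are hypothetical names.

```javascript
// Estimate a tweet's length once every link has been swapped for a t.co URL.
function tweetLength(text, shortUrlLength, shortUrlLengthHttps){
  var urlRegex = /https?:\/\/\S+/g;
  var urls = text.match(urlRegex) || [];
  // Count everything except the links, then add the fixed t.co length per link.
  var length = text.replace(urlRegex, "").length;
  urls.forEach(function(url){
    length += url.indexOf("https:") === 0 ? shortUrlLengthHttps : shortUrlLength;
  });
  return length;
}

function fitsInTweet(text, shortUrlLength, shortUrlLengthHttps){
  return tweetLength(text, shortUrlLength, shortUrlLengthHttps) <= 140;
}

console.log(tweetLength("Read this http://example.com/a/very/long/path now", 20, 21)); // 34
```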

For some completeness, this is the complete function that makes POST requests with OAuth

function postHttpsAuthJSON(host, path, data, nonce, callback){

var url = { host: host, path: path, method: "POST" };

var timestamp = Math.floor(new Date().getTime() / 1000);

var oauthData = { oauth_consumer_key: consumerKey, oauth_nonce: nonce, oauth_signature_method: "HMAC-SHA1", oauth_timestamp: timestamp, oauth_token: accessToken, oauth_version: "1.0" };

var sigData = {};

for (var k in oauthData){

sigData[k] = oauthData[k];

}

for (var k in data){

sigData[k] = data[k];

}

var sig = generateOAuthSignature(url.method, "https://" + url.host + url.path, sigData);

oauthData.oauth_signature = sig;

var oauthHeader = "";

for (var k in oauthData){

oauthHeader += ", " + urlEncode(k) + "=\"" + urlEncode(oauthData[k]) + "\"";

}

oauthHeader = oauthHeader.substring(1);

var req = https.request(url, function(resp){

resp.setEncoding("utf8");

var respData = "";

resp.on("data", function(data){

respData += data;

});

resp.on("end", function(){

if (resp.statusCode != 200){

callback({error: resp.statusCode, message: respData });

}

else callback(JSON.parse(respData));

});

});

req.setHeader("Authorization", "OAuth" + oauthHeader);

req.write(querystring.stringify(data));

req.end();

}

Thanks for reading! I hope this helps you out. A reminder: this is just for posting as a single user. It doesn't fetch the auth token or do any of the other handshaking that OAuth requires. Twitter generates an access token and an access token secret for the user that created the application, and you can make calls to the API with those, as that user.

I refer you to my original post with my SyncArray code

function getSubdirs(path, callback){

fs.readdir(path, function(err, files){

var sync = new SyncArray(files);

var subdirs = [];

sync.forEach(function(file, index, array, finishedOne){

fs.stat(path + "/" + file, function(err, stats){ // readdir returns bare names, so prefix the folder

if (stats.isDirectory()){

subdirs.push(file);

}

finishedOne();

});

}, function(){

callback(subdirs);

});

});

}

I updated my JS combiner functionality in the web server with JSMin! I found a Node.js JSMin implementation, added the line of code that minifies my combined file, and writes that out as the file to download. The one instance combined three big jQuery libraries that are used on almost every page, and it went from 37KB to 26KB. Before it was comparable in size to 37KB (probably identical) but it was three calls to the web server. This should limit the calls a ton.

I couldn't think of a good title for this one, but here's what I needed to accomplish: loop over an array without moving on to the next element until I'm finished with the current one. This is simple in synchronous, blocking code, but once you get to the world of Node.js and non-blocking calls going on everywhere, it becomes wildly more difficult. One could add the word "Semaphore" to this post and it wouldn't be too off the wall.

Take the following code for example:

var array = ["www.google.com", "www.yahoo.com", "www.microsoft.com", "givit.me", "www.jasontconnell.com"];

array.forEach(function(d, i){

http.get({ host: d, port: 80, path: "/" }, function(res){

console.log("index = " + i + " - got response for " + d);

});

});

Add in the appropriate "require" statements, run the code, and this is what I got the first time I ran it:

index = 0 - got response for www.google.com

index = 3 - got response for givit.me

index = 2 - got response for www.microsoft.com

index = 4 - got response for www.jasontconnell.com

index = 1 - got response for www.yahoo.com

That is not a predictable order! So how do we fix this? EventEmitter!! This took me a surprisingly small amount of time to figure out. Here's the code:

var http = require("http");

var EventEmitter = require("events").EventEmitter;

var util = require("util"); // "sys" was the old name for the util module

function SyncArray(array){ this.array = array; }

util.inherits(SyncArray, EventEmitter);

SyncArray.prototype.forEach = function(callback, finishedCallback){

var self = this;

this.on("nextElement", function(index, callback, finishedCallback){

self.next(++index, callback, finishedCallback);

});

this.on("finished", function(){

});

self.next(0, callback, finishedCallback);

}

SyncArray.prototype.next = function(index, callback, finishedCallback){

var self = this;

var obj = index < self.array.length ? self.array[index] : null;

if (index < self.array.length){ // check the index, so falsy elements like "" or 0 don't end the loop early

callback(obj, index, self.array, function(){

self.emit("nextElement", index, callback, finishedCallback);

});

}

else {

finishedCallback();

self.emit("finished");

}

}

var array = ["www.google.com","www.yahoo.com", "www.microsoft.com", "givit.me", "www.jasontconnell.com"];

var sync = new SyncArray(array);

sync.forEach(function(d, i, array, finishedOne){

http.get({ host: d, port: 80, path: "/" }, function(res){

console.log("index = " + i + " - got response from " + d );

finishedOne();

});

}, function(){

console.log("finished the sync array foreach loop");

});

And the output as we expected:

index = 0 - got response from www.google.com

index = 1 - got response from www.yahoo.com

index = 2 - got response from www.microsoft.com

index = 3 - got response from givit.me

index = 4 - got response from www.jasontconnell.com

finished the sync array foreach loop

Feel free to update this with best practices, better code, better way to do it in Node.js (perhaps built in?), etc, in the comments. I'm really excited about this since holy F#@$ that's been frustrating!
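For what it's worth, the same one-at-a-time loop can be written without EventEmitter. Here's a minimal sketch. forEachSeries is a made-up name, not something built into Node.js, and setTimeout stands in for a non-blocking call like http.get.

```javascript
// Calls iterator for each element, waiting for finishedOne() before moving on.
function forEachSeries(array, iterator, finished){
  var index = 0;
  function next(){
    if (index >= array.length) return finished();
    var current = index++;
    iterator(array[current], current, array, next);
  }
  next();
}

// Usage: each "request" only starts after the previous one has called finishedOne.
forEachSeries(["a", "b", "c"], function(item, i, array, finishedOne){
  setTimeout(function(){
    console.log("index = " + i + " - finished " + item);
    finishedOne();
  }, 10);
}, function(){
  console.log("finished the loop");
});
```

The closure keeps the position in a local variable, so there are no listeners to register or clean up, and calling it twice on the same array doesn't stack up handlers.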

The practice of combining and minifying JS files is one that should be done at all times. There are two ways to do this: one involves creating the combined file manually. The other involves fun and less future work.

I write my JS into many different files, breaking down functionality, but the client doesn't need to see that.

On my web server, I have a server side tag library that allows me to do stuff like include other files (so I can break up development into components), run "foreach" statements over arrays, conditional processing, and other stuff. I've added to this arsenal with a "combine" tag.

It's used like this:

<jsn:combine>

/js/file1.js

/js/file2.js

/js/file3.js

</jsn:combine>

These files get combined and added to the /js/combine/ folder, and the script tag gets written out in the response. This checks each time if one of the js files has been updated since the combined javascript file has been written.

There would be way too much code to spit out to show how all of the tags and the server works, so I'll just show the code that does the combine

var combineRegex = /(.*?\.js)\n/g;

var rootdir = site.path + site.contentRoot;

var combineFolder = "/js/combine/";

var file = combineFolder + jsnContext.localScope + ".js";

var writeTag = function(jsnContext, file){

var scriptTag = "<script type=\"text/javascript\" src=\"" + file + "\"></script>";

jsnContext.write(scriptTag);

callback(jsnContext);

}

var combineStats = filesystem.fileStats(rootdir + file);

var writeFile = combineStats == null;

var altroot = null;

if (site.config.isMobile && site.main != null){

altroot = site.path + site.main.contentRoot;

}

var paths = [];

var match; // declared here so it doesn't leak into the global scope

while ((match = combineRegex.exec(files)) != null){

var localPath = match[1].trim();

var fullPath = rootdir + localPath;

var origStats = filesystem.fileStats(fullPath);

var altStats = filesystem.fileStats(altroot+localPath);

// add paths and determine if we should overwrite / create the combined file

if (origStats != null){

paths.push(fullPath);

writeFile = writeFile || combineStats.mtime < origStats.mtime;

}

else if (altroot != null && altStats != null){

paths.push(altroot+localPath);

writeFile = writeFile || combineStats.mtime < altStats.mtime;

}

}

writeTag(jsnContext, file);

if (writeFile){

filesystem.ensureFolder(rootdir + combineFolder, function(success){

if (success){

filesystem.readFiles(paths, function(content){

filesystem.writeFile(rootdir+file, content, function(success){

});

});

}

});

}
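The "has anything changed since the combined file was written" check can be isolated into a small function. This is only a sketch: filesystem.fileStats is a helper from the server that isn't shown here, so statOrNull below is a hypothetical stand-in built on fs.statSync, and needsRebuild is a made-up name.

```javascript
var fs = require("fs");

// Returns the stats for a path, or null when it can't be read (e.g. it doesn't exist).
function statOrNull(path){
  try { return fs.statSync(path); }
  catch (e){ return null; }
}

// True when the combined file is missing or older than any of the source files.
function needsRebuild(combinedStats, sourceStatsList){
  if (combinedStats == null) return true;
  return sourceStatsList.some(function(stats){
    return stats != null && stats.mtime > combinedStats.mtime;
  });
}

var combined = { mtime: new Date(2000) };
console.log(needsRebuild(combined, [{ mtime: new Date(1000) }])); // false
console.log(needsRebuild(combined, [{ mtime: new Date(3000) }])); // true
console.log(needsRebuild(null, [])); // true
```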

Some other stuff going on... my server has built in mobile site support. You can specify "this is the mobile site for this full site" and "for this mobile site, this is the main site", both running independent but they know of each other. I can have the file /js/mobile-only.js combined on the mobile site, where that file exists in the mobile site's content root, and I can have the file /js/main-site-only.js combined on mobile, where that file only exists on the main site.

A nice-to-have would be to also minify the files on the fly.

Yesterday I lied when I said it would be the last Node.js post for a while! Oh well.

So today I was looking to make my project site a little faster, particularly on the mobile side. Actually this was the last three days worth of trying to figure stuff out. Node.js has plenty of compression library add-ons (modules), but the most standard compression tool out there is gzip (and gunzip). In the Accept-Encoding request header, the browser will tell you whether or not it can handle it. Most can...

This seemed like an obvious mechanism to employ to decrease some page load times... not that it's soo slow, but when the traffic gets up there and the site starts bogging down, at least the network will be less of a bottleneck. Some browsers do not support it, so you always have to send uncompressed content in those cases.

So I found a good Node.js compression module that supported BZ2 as well as gzip. The problem was, it was only meant to work with npm (Node's package manager), which for whatever reason, I've stayed away from. I like to keep my modules organized myself, I guess! So I pull the source from github and build the package if it requires it, then make sure I can use it by just calling require("package-name"); It's worked for every case except the first gzip library I found... doh! Luckily, github is a very social place, and lots of developers will just fork a project and fix it. That was where the magic started. I found a fork of the node-compress that fixed these issues, installed the package correctly by just calling ./build.sh (which calls node-waf, which I'm fine with using!), and copied the binary to the correct location within the module directory. So all I had to do was modify my code to require("node-compress/lib/compress"); I'm fine with that too.

var compress = require("node-compress/lib/compress");

var encodeTypes = {"js":1,"css":1,"html":1};

function acceptsGzip(req){

var url = req.url;

var ext = url.indexOf(".") == -1 ? "" : url.substring(url.lastIndexOf(".")+1);

return (ext in encodeTypes || ext == "") && req.headers["accept-encoding"] != null && req.headers["accept-encoding"].indexOf("gzip") != -1;

}

function createBuffer(str, enc) {

enc = enc || 'utf8';

var len = Buffer.byteLength(str, enc);

var buf = new Buffer(len);

buf.write(str, enc, 0);

return buf;

}

this.gzipData = function(req, res, data, callback){

if (data != null && acceptsGzip(req)){

var gzip = new compress.Gzip();

var encoded = null;

var headers = null;

var buf = Buffer.isBuffer(data) ? data : createBuffer(data, "utf8");

gzip.write(buf, function(err, data1){

encoded = data1.toString("binary");

gzip.close(function(err, data2){

encoded = encoded + data2.toString("binary");

headers = { "Content-Encoding": "gzip" };

callback(encoded, "binary", headers);

});

});

}

else callback(data);

}

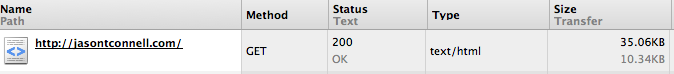

So it's awesome. Here's a picture of the code working on this page

Quite the improvement, at less than 30% of the size! Soon I'm going to work in a static file handler, so that it doesn't have to re-gzip js and css files every request, although I use caching extensively, so it won't have to re-gzip it for you 10 times in a row, only re-gzip it for 10 different users for the first time... I can see that being a problem in the long run, although, it's still fast as a mofo!
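Newer versions of Node.js ship a built-in zlib module, so the same idea can be sketched without a third-party package. This is not the code from the post: gzipBody is a hypothetical name, it skips the file-extension check, and it uses the synchronous gzipSync only to keep the example short. A real server would more likely use the callback-based zlib.gzip so compression doesn't block other requests.

```javascript
var zlib = require("zlib");

// True when the request headers say the client can handle gzip.
function acceptsGzip(headers){
  var accept = headers["accept-encoding"] || "";
  return accept.indexOf("gzip") != -1;
}

// Returns the (possibly compressed) body plus any headers to add to the response.
function gzipBody(headers, body){
  if (body == null || !acceptsGzip(headers)) return { body: body, headers: {} };
  var buf = Buffer.isBuffer(body) ? body : Buffer.from(body, "utf8");
  return { body: zlib.gzipSync(buf), headers: { "Content-Encoding": "gzip" } };
}

var page = new Array(200).join("<p>hello world</p>");
var result = gzipBody({ "accept-encoding": "gzip, deflate" }, page);
console.log(page.length + " bytes before, " + result.body.length + " bytes after");
```

Keeping the compressed data as a Buffer avoids the round trip through "binary" strings.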

Probably my last post about Node.js for a while. My original implementation of the webserver used page objects in the following way:

index.html -> /site/pages/index.js

Meaning that when index.html was requested, index.js was located and executed. A side effect of this, using the node.js construct "require", is that the js page will only be loaded once. Which was bad because I had my code structured in the following way:

//index.js:

this.title = "My page title";

this.load = function(..., finishedCallback){

this.title = figureOutDynamicTitle(parameters);

finishedCallback();

}

Granted, when someone went to index.html, there was only a very small window in which they might get the wrong title, in this example. But for things like setting an array on the page to a value loaded from the database, or where another branch of the load function doesn't set the array at all, there's a very good chance that someone will see something that only someone else was supposed to see.

How I fixed this was to pass back the page object to the finishedCallback. The page object was declared, built and passed back all within the context of the load function, so it never has a chance to be wrong! This is how it looks now

//index.js

this.load = function(..., finishedCallback){

var page = { title: figureOutDynamicTitle(parameters) };

finishedCallback({ page: page });

}

This works. And it's super fast still.
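To see why the shared object is a problem, here's a small simulation. It's a sketch, not the server's real code: overlappingTitles is a hypothetical helper that lets two "requests" finish loading before either one renders, which is what can happen when loads overlap.

```javascript
// One object shared by every request, the way a require()'d page module is.
var sharedPage = {
  title: "My page title",
  load: function(params, finishedCallback){
    this.title = params.title;
    finishedCallback();
  }
};

// The fixed shape: everything request-specific is built inside load().
var safePage = {
  load: function(params, finishedCallback){
    finishedCallback({ page: { title: params.title } });
  }
};

// Simulates two overlapping requests: both load before either one renders.
function overlappingTitles(pageModule){
  var renders = [];
  ["Title for A", "Title for B"].forEach(function(title){
    pageModule.load({ title: title }, function(result){
      renders.push(function(){
        return result ? result.page.title : pageModule.title;
      });
    });
  });
  return renders.map(function(render){ return render(); });
}

console.log(overlappingTitles(sharedPage)); // [ 'Title for B', 'Title for B' ]
console.log(overlappingTitles(safePage));   // [ 'Title for A', 'Title for B' ]
```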

The Node.js process class is very helpful for cleanup purposes. You can imagine, when writing a rudimentary web server, you might also have a mechanism for tracking sessions. This was definitely the case for me, so we can easily keep track of who's logged in, without exposing much in the way of security.

I haven't worked in a way to update my code without restarting the server, so any code change means a restart, which in turn deletes all of the sessions. I was thinking of a way to handle this, knowing of Node.js's process class, but where to put the code was not immediately obvious. Once I started coding for this exact purpose, it was a shame that I didn't think of it right away, just for the sheer fact that I cannot lie, so I can't say "Yeah I thought of it right away" :)

Here's the code:

function closeServer(errno){

console.log("Server closing, serializing sessions");

SessionManager.serialize(config.web.global.sessionStore, function(){

server.close();

process.exit(0);

});

}

process.on("SIGTERM", closeServer);

process.on("SIGINT", closeServer);

Luckily, killproc sends SIGTERM, and pressing CTRL+C sends a SIGINT signal. I've noticed kill also just sends SIGTERM, with SIGKILL being a very explicit option, so we may just have to listen for SIGTERM and SIGINT. Anyway.

sessionStore is the folder to store them in, and it clears them out each time and saves what's in memory, so we don't get months old sessions in there.

I just serialize them using JSON.stringify and deserialize with its counterpart, JSON.parse. It works beautifully.

The session ID is just a combination of the time, plus some user variables like user agent, hashed and hexed. Here's the code that serializes and deserializes.

SessionManager.prototype.serialize = function(path, callback){

var self = this;

fshelp.ensureFolder(path, function(err, exists){

fshelp.clearFolder(path, function(err, exists){

for (var id in self.sessions){

if (!self.isExpired(self.sessions[id]))

fs.writeFileSync(path + id, JSON.stringify(self.sessions[id]), "utf8");

}

delete self.sessions;

callback();

});

});

}

SessionManager.prototype.deserialize = function(path, callback){

var self = this;

fshelp.ensureFolder(path, function(err, exists){

fs.readdir(path,function(err, files){

files.forEach(function(d){

var id = d.substring(d.lastIndexOf("/") + 1);

self.sessions[id] = JSON.parse(fs.readFileSync(path + d, "utf8"));

});

callback();

});

});

}

Now no one gets logged out when I have to restart my server for any reason!

Since all of this takes place at the end or the beginning, I figured to save some brain power and just use the synchronous operations for writing and reading the files.
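The fshelp helpers aren't shown in this post, so here's a self-contained sketch of the same save-and-restore round trip using only fs and path. saveSessions and loadSessions are hypothetical names, the expiry check is left out, and the recursive option on mkdirSync assumes a reasonably recent Node.js.

```javascript
var fs = require("fs"), path = require("path");

// Clears the folder, then writes one JSON file per session, named by session ID.
function saveSessions(dir, sessions){
  fs.mkdirSync(dir, { recursive: true });
  fs.readdirSync(dir).forEach(function(file){
    fs.unlinkSync(path.join(dir, file));
  });
  Object.keys(sessions).forEach(function(id){
    fs.writeFileSync(path.join(dir, id), JSON.stringify(sessions[id]), "utf8");
  });
}

// Reads every file in the folder back into an object keyed by session ID.
function loadSessions(dir){
  var sessions = {};
  fs.readdirSync(dir).forEach(function(id){
    sessions[id] = JSON.parse(fs.readFileSync(path.join(dir, id), "utf8"));
  });
  return sessions;
}
```

Using path.join means it doesn't matter whether the configured folder ends with a slash.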

As you can see. Very simple, no images, but the bells and whistles are there. I wrote it up over a weekend, probably 6-7 hours total, converting the original mysql database over to mongodb, and writing some quick code in node.js.

There's only one table, posts, so it was easy. There is a mechanism for me to edit the posts, and add new ones, but it's very crude!

Take it easy. Peace.

In my many years of web development, I've come across a lot of good ways that platform authors did stuff, and a lot of bad ways. So I'm writing my version of a web platform on Node.js, and I decided to keep the good stuff, and get rid of what I didn't like. It wasn't easy but I'm pretty much finished by now.

As with most things I develop, I'll decide on an architecture that allows for changes to be made in a way that makes sense, but I'll start with what I want the code to look like. Yes. When I wrote my ORM, I started with the simple line, db.save(obj); (it turns out that's how you do it in MongoDB so I didn't have to write an ORM with Mongo :) When starting a web platform, I started out the same way.

I wanted to write:

<list value="${page.someListVariable}" var="item">

Details for ${item.name}

<include value="/template/item-template.html" item="item" />

</list>

Obvious features here are code and presentation separation, SSIs, simple variable replacement with ${} syntax.

There aren't a lot of tags in my platform. There's an if, which you can use to decide whether to output something. There's an include, which you can pass variables from the main page so you can reuse it on many pages. This one takes an "item" object, which it will refer to in its own code with ${item}.
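The platform's own replacement code isn't shown in this post, but the ${} idea can be sketched in a few lines. This is a guess at the general shape, not the real implementation: replaceVariables is a made-up name, it only handles dotted property paths, and it renders missing values as an empty string.

```javascript
// Replaces ${a.b.c} with the matching value from scope.
function replaceVariables(template, scope){
  return template.replace(/\$\{([\w.]+)\}/g, function(match, path){
    var value = path.split(".").reduce(function(obj, key){
      return obj == null ? undefined : obj[key];
    }, scope);
    return value == null ? "" : String(value);
  });
}

console.log(replaceVariables("Details for ${item.name}", { item: { name: "Widget" } }));
// Details for Widget
```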

Recently I added a layout concept. So you can have your layout html in another file, and just put things into the page in the page's actual html. For instance, you might reach the file index.html, which would look like this:

<layout name="main">

<content name="left-column">

<include value="/template/navigation.html" />

</content>

<content name="main-column">

<include value="/template/home-content.html" />

</content>

</layout>

Java Server Faces used a two way data binding mechanism which was really helpful. But then you need controls, like input[type=text] or whatever. My pages will not have two way data binding, but you can use plain html. Which I like better. (However, those controls were very simple to swap due to the generous use of interfaces by Java, and their documentation pretty much mandating their use. e.g. using ValueHolder in Java instead of TextBox, and if you were to make it a "select" or input[type=hidden], your Java code would not have to change, which is one thing I absolutely hate about ASP.NET).

I borrow nothing from PHP.

ASP.NET pretty much does nothing that I like, other than that it's easy to keep track of what code gets run when you go to /default.aspx: the code in /default.aspx.cs, plus whatever Page class it inherits from or master page it's on. In Java Server Faces you're scrounging through xml files to see which session bean got named "mybean".

My platform is similar to ASP.NET in that for /index.html there's a /site/pages/index.js (have I mentioned that it's built on node.js?) that can optionally exist and can implement one or two functions, "load" and "handlePost", if your page is so inclined to handle posts. Another option is to have this file exist, implement neither load nor handlePost, and just have properties in it. It's up to you (well, me).

Here's a sample sitemap page for generating a Google Sitemap xml file:

Html:

<?xml version="1.0" encoding="UTF-8"?>

<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">

<url>

<loc>http://${config.hostUrl}/index</loc>

<lastmod>2011-06-16</lastmod>

<changefreq>monthly</changefreq>

<priority>0.5</priority>

</url>

<jsn:foreach value="${page.entries}" var="entry">

<url>

<loc>${entry.loc}</loc>

<lastmod>${entry.lastmod}</lastmod>

<changefreq>${entry.changefreq}</changefreq>

<priority>${entry.priority}</priority>

</url>

</jsn:foreach>

</urlset>

I use the jsn prefix, which just stands for (now, anyway) Javascript Node. I wasn't creative. I guess I can call it "Jason's Site N..." I can't think of an N.

And the javascript:

var date = require("dates"), common = require("../common");

this.entries = [];

this.load = function(site, query, finishedCallback){

var self = this;

self.entries = []; // start fresh so repeated requests don't pile up duplicate entries

var now = new Date(Date.now());

var yesterday = new Date(now.getFullYear(), now.getMonth(), now.getDate() - 1);

var yesterdayFormat = date.formatDate("YYYY-MM-dd", yesterday);

common.populateCities(site.db, function(states){

for (var i = 0; i < states.length; i++){

states[i].cities.forEach(function(city){

var entry = {

loc: "http://" + site.hostUrl + "/metro/" + city.state.toLowerCase() + "/" + city.key,

lastmod: yesterdayFormat,

changefreq: "daily",

priority: "1"

}

self.entries.push(entry);

});

}

finishedCallback({contentType: "text/xml"});

});

}

My finishedCallback function can take more parameters, say for handling a JSON request, I could add {contentType: "text/plain", content: JSON.stringify(obj)}.

That's about all there is to it! It's pretty easy to work with so far :) My site will launch soon!

This can lead to some pretty sweet code. For one thing, always add a callback as a parameter to functions you create, to keep with the non-blocking nature. The next thing you need to know is that you will back yourself into a corner!

Take the following code:

collection.find(search, {sort: sort}, function(err, cursor){

cursor.toArray(function(err, messages){

for (var i = 0; i < messages.length; i++){

db.dereference(messages[i].from, function(err, result){

messages[i].from_deref = result;

});

}

callback(messages);

});

});

Backstory: I'm using MongoDB as the backend, I have a message collection, a user collection, and messages have a "from" property that is a DBRef to a user.

You would run this code and find that if you had any number of messages greater than zero, you will probably get a null "from_deref" object, which means the callback at the end was called before the dereferences finished. That is if you're lucky enough to not get an error stating that the code "can't set the property from_deref of undefined", which means that "i" has already reached the length of the array by the time the callback for db.dereference runs, so messages[i] is undefined. If it's not obvious, I'm dereferencing the user's DBRef and storing it in the message's from_deref property.

This is because of the non-blocking nature of Node.js. It's interesting because it makes me think in new ways. Anything that makes you think differently is good in my opinion. So how do we accomplish this and not break anything? Consider the following code as a solution:

collection.find(search, {sort: sort}, function(err, cursor){

cursor.toArray(function(err, messages){

var remaining = messages.length; // dereferences still in flight

if (remaining == 0) return callback(messages);

for (var i = 0; i < messages.length; i++){

(function(messages, index){

db.dereference(messages[index].from, function(err, result){

messages[index].from_deref = result;

if (--remaining == 0)

callback(messages);

});

})(messages, i);

}

});

});

Javascript is awesome. This is basically an anonymous function that I define and call in the same block. The definition is everything inside (function(x,y){}) and the call is in the parentheses following: (messages, i); So this calls the inner block with the value of i that I'm hoping it will (or rather than hoping, I'm confident it will!). The dereferences can come back in any order, so checking for the last index isn't enough to know that they're all done. Instead, the remaining variable starts at the number of messages and counts down as each dereference returns. When it hits zero, every message has been processed and it's safe to call the callback.

Of course, this doesn't take advantage of the nextObject function on the cursor object in the node-mongodb-native library. That would totally solve this without javascript magic:

cursor.nextObject(function(err, message){

db.dereference(message.from, function(err, result){

message.from_deref = result;

});

});

However, I like the Array...

So there you have it.
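Here's the "wait for every callback" idea pulled out into a standalone sketch that runs without MongoDB. dereferenceAll and fakeDereference are hypothetical names, and setTimeout with a random delay stands in for db.dereference so the results come back out of order.

```javascript
// Calls dereference for every message and fires callback once all have answered.
function dereferenceAll(messages, dereference, callback){
  var remaining = messages.length;
  if (remaining === 0) return callback(messages);
  messages.forEach(function(message){
    dereference(message.from, function(err, result){
      message.from_deref = result;
      if (--remaining === 0) callback(messages);
    });
  });
}

// Stand-in for db.dereference: answers after a random delay.
function fakeDereference(ref, callback){
  setTimeout(function(){
    callback(null, { name: "user " + ref });
  }, Math.random() * 20);
}

dereferenceAll([{ from: 1 }, { from: 2 }, { from: 3 }], fakeDereference, function(messages){
  console.log(messages.map(function(m){ return m.from_deref.name; }));
});
```

Using forEach gives each iteration its own message variable, so the wrapping anonymous function isn't needed, and counting down means it doesn't matter which dereference happens to finish last.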

Image processing is very important if you are going to allow anyone, even if it's only you, to upload images to your site. The fact of the matter is, not everyone knows how to resize an image, and forcing users to do it will mean less user submitted content on your site, since it's a pain, and other sites let you upload non-processed images. If it's just you uploading images, laziness will take over and you will stop doing it because it's not easy. So get with the times!

I found a Node.js plugin for GraphicsMagick. I learned about both the plugin and GraphicsMagick itself simultaneously. GraphicsMagick is pretty sweet, minus setting it up. After you get it set up though, you can perform many operations on any image format that you configured within GraphicsMagick.

This post will not cover setting up GraphicsMagick (although I will point out that setting LDFLAGS=-L/usr/local/include in my instance saved me from problems with missing LIBPNGxxx.so files), and I couldn't get it to work on my Mac. Here's how I'm using GraphicsMagick, via the excellent Node.js module, gm.

var gm = require("gm"), fs = require("fs");

var basePath = "/path/to/images/";

var maxDimension = 800;

var maxThumbDimension = 170;

var thumbQuality = 90;

var processImages = function(images){

console.log("processing : " + images.length + " image(s)");

images.forEach(function (image, imageIndex){

var fullPath = basePath + image;

var newFilename = basePath + "scaled/" + image;

gm(fullPath).size(function(err, value){

var newWidth = value.width, newHeight = value.height, ratio = 1;

if (value.width > maxDimension || value.height > maxDimension){

// scale by the longer side so neither dimension ends up over maxDimension

ratio = maxDimension / Math.max(value.width, value.height);

newWidth = Math.round(value.width * ratio);

newHeight = Math.round(value.height * ratio);

}

if (newWidth != value.width){

console.log("resizing " + image + " to " + newWidth + "x" + newHeight);

gm(fullPath).resize(newWidth, newHeight).write(newFilename, function(err){

if (err) console.log("Error: " + err);

console.log("resized " + image + " to " + newWidth + "x" + newHeight);

});

}

else copyTheFileToScaledFolder(); // ?? how do you do this?!? :P

});

});

};
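The resize arithmetic can be pulled out into a small pure function, which makes it easy to check without GraphicsMagick installed. This is a sketch under one assumption: the image should be scaled by its longer side, so tall images end up within the limit too. scaleToFit is a made-up name.

```javascript
// Returns the dimensions to resize to, keeping the aspect ratio.
function scaleToFit(width, height, maxDimension){
  var longest = Math.max(width, height);
  if (longest <= maxDimension) return { width: width, height: height };
  var ratio = maxDimension / longest;
  return { width: Math.round(width * ratio), height: Math.round(height * ratio) };
}

console.log(scaleToFit(1600, 1200, 800)); // { width: 800, height: 600 }
console.log(scaleToFit(1000, 2000, 800)); // { width: 400, height: 800 }
console.log(scaleToFit(640, 480, 800));   // { width: 640, height: 480 }
```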

I run this as a service instead of putting it in the web application. It is on an interval, and you just let node.js handle it! That part was simple:

var interval = setInterval(function(){ getImages(); }, 4000); // getImages calls processImages itself once it has the file list

Your getImages function might look like this:

var getImages = function(){

fs.readdir(basePath, function(err, files){

// this won't work...

// filter out folders, non-image files and files that have already been processed

processImages(files);

// maybe delete these images so you don't have to keep track of previously processed images

});

}

This is not how my code works, since my images are in a MongoDB database and my document has a "resizeImages" boolean property on it, to trigger this to get images to resize. So I don't know if it will work, or what the fs.readdir sends in its files argument on the callback! But you can try :)

With GraphicsMagick, you could also change the format of the image, if you were a stickler and wanted only PNG or JPG files. You can apply filters like motion blur, or transforms like rotation, add text, etc. It is pretty magical...

I am starting a joint venture with my very good friend, where I am doing the coding, and I decided that I will be doing it in Node.js with a MongoDB backend. I was thinking about why these two technologies, and how I would explain my choices to another techy. I think I have my explanation figured out...

First and foremost, Javascript. I have come to love everything about it! For my side projects, I used to use Java, and wrote a pretty decent web middle tier and rolled my own ORM also. You are witnessing it in action by reading this post. I could make an object like "Book", add properties to it, give them properties in an XML file (a series of them, if you know how Java web apps work ;), and my ORM would create the table, foreign keys, etc. It was particularly magical in how it handled foreign keys and loading referenced objects in one SQL query, knowing which type of join to apply and everything. However, this was all a daunting task, made even more so by the fact that Java allows abstraction to the Nth degree. If I could remember that code, I would be very much more specific on how it worked, but I had an object for connecting to the database, an object in another project for building SQL because I thought I could abstract that out and rewrite it if I had to, and swap in SQL building engines. That was the main cause of pain, I would think "If I just wanted to swap in another X, it would be easy." I initially made plans to have it either go to SQL or XML or JSON, whatever you wanted, and you would just swap in an engine in the config file. It was heavily reflection based :)

So, I've been writing Javascript a lot. Only lately, but before discovering Node.js, have I come to realize its power... anonymous methods and objects, JSON, Functions as first class citizens. Of course there are the libraries, like jQuery, and Google APIs, like maps for instance. There's the sheer fact that you don't have to think ahead about every possibility for an object before creating it. Like, my Book object would have an author, title, etc. If later I wanted to add the ISBN or something, in Java I would have to update any IReadable interfaces (well, considering that you could read a cereal box that would undoubtedly NOT have an ISBN, this example is falling apart, but you know what I'm talking about :P ), then update the Book class, update the list to enable searching by an ISBN, etc. Tons of stuff. Javascript:

var Book = function(opt) { for (var i in opt) { this[i] = opt[i]; } }

Imagine I start calling it with

var book = new Book({title: "Brainiac", author: "Ken Jennings", ISBN: "some string of numbers"});

I can now just get the ISBN in other places by calling "book.ISBN".